I’ve started building a Web toolkit for DJs, and in the process I’m constantly learning more about running a Web server[^1]. Being a huge data nerd, I wanted Logstash and Kibana to be among the first things installed on the Linode VPS. Lots of data. Lots of pretty graphs. Drool.

[^1]: I’m a language geek. And, being a nerd and a Pythonista, I’m stealing my writing style from Django. How nerdy is that! On a related note, English isn’t my native language (hello from Finland!) and I know that I’m not very good at writing it. But I try. And I am a language geek.

And it was supposed to be easy.

But based on 15 years of experience, it very seldom is.

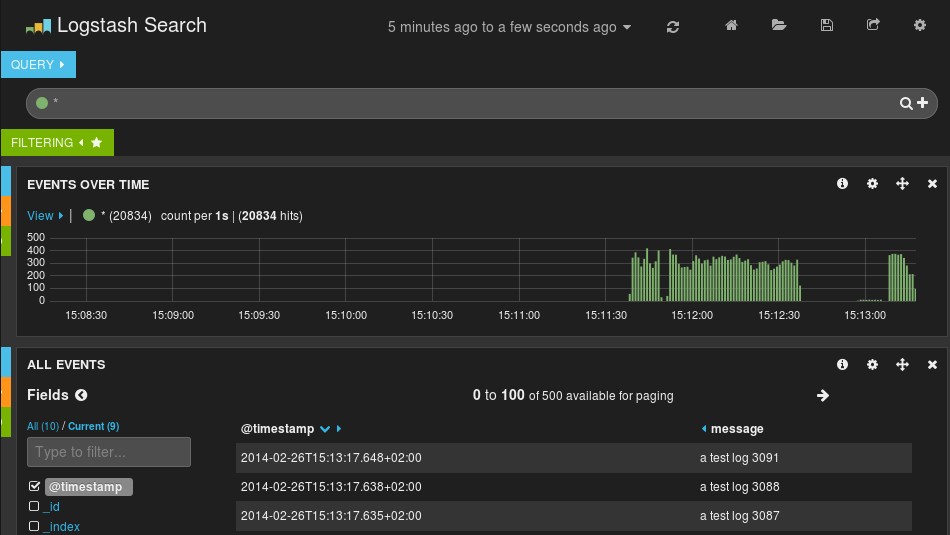

Logstash and its Elasticsearch backend installed nicely (on Ubuntu 14.04 Server) from a .deb package. Kibana was basically an all-manual install from source, but it’s JavaScript and an Nginx vhost is very simple to configure, so it wasn’t so bad. The first test inputs worked perfectly, and the first little bits of data were already making Kibana look cool. How nice!

But after I added some initial configuration files to gather logs from Nginx, Apache and syslog, Logstash wouldn’t collect data when started from the init script. I was dumb enough not to try running it with the `--verbose` flag, so it took a couple of hours (yes) of head scratching, an unhelpful visit to the Logstash IRC channel, and a post to the Google user group (which got a very fast and helpful answer) before I figured out that I needed to set a SINCEDB_DIR environment variable so the script would know where to store its internal metadata. That seemed like a really weird problem to have for a program that had been installed as a service from a .deb package.
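
For the fellow Python people, here is a rough sketch of the kind of lookup that was failing. It assumes the file input falls back from SINCEDB_DIR to HOME and gives up when neither is set, which an init script with a stripped-down environment can easily trigger. The function name and the `/var/lib/logstash` path are made up for illustration; this is not Logstash's actual code.

```python
def resolve_sincedb_dir(env):
    """Pick the directory for sincedb files from an environment mapping.

    Assumed lookup order: SINCEDB_DIR first, then HOME, otherwise fail.
    """
    for name in ("SINCEDB_DIR", "HOME"):
        value = env.get(name)
        if value is not None:
            return value
    raise RuntimeError("neither SINCEDB_DIR nor HOME is set")


# An init script often runs with a minimal environment like this one.
init_env = {"PATH": "/usr/bin:/bin"}
try:
    resolve_sincedb_dir(init_env)
    started = True
except RuntimeError:
    started = False

# Setting the variable explicitly (hypothetical path) makes the lookup succeed.
fixed_env = dict(init_env, SINCEDB_DIR="/var/lib/logstash")
sincedb_dir = resolve_sincedb_dir(fixed_env)
```

In an interactive shell HOME is almost always set, which would explain why the same configuration can work from the command line and still fail as a service.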

In an unrelated learning experience, I had a hard time figuring out how the Logstash configuration files work. The documentation left me thinking that I was supposed to write one config file with input, filter and output sections for every log I wanted to consume. But it turns out that while you can have multiple inputs and filters, you should have only one common output section for all of the log files (if you want to collect all of the logs in the same place). I figured this out only after the data started showing up multiplied in Kibana. So eventually I ended up collecting all of the configs in one big file with a huge input section covering all of the logs I wanted to parse, a huge filter section matching the inputs, and one small output section that forwards everything to the Elasticsearch backend.
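
A toy Python model of why the data multiplied may help. It assumes Logstash merges every file in the config directory into a single pipeline, so each event reaches every output block unless a conditional filters it out. The function and the dictionary layout are invented for illustration; this is not Logstash code.

```python
def run_pipeline(config_files, events):
    """Deliver every event to every output found in any config file."""
    # All files are merged, so their outputs end up in one shared pipeline.
    outputs = [out for cfg in config_files for out in cfg["outputs"]]
    delivered = []
    for event in events:
        for out in outputs:
            delivered.append((out, event))
    return delivered


events = ["nginx line", "apache line", "syslog line"]

# One file per log, each carrying its own elasticsearch output.
per_log_files = [
    {"inputs": ["nginx"], "outputs": ["elasticsearch"]},
    {"inputs": ["apache"], "outputs": ["elasticsearch"]},
    {"inputs": ["syslog"], "outputs": ["elasticsearch"]},
]

# All inputs together with a single shared output.
single_output = [
    {"inputs": ["nginx", "apache", "syslog"], "outputs": ["elasticsearch"]},
]

duplicated = run_pipeline(per_log_files, events)
clean = run_pipeline(single_output, events)
print(len(duplicated), len(clean))  # prints "9 3"
```

With three output blocks, each of the three events is stored three times, which matches the multiplied data in Kibana. A single output block stores each event once.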

But at the end of the day, I got myself an awesome, beautiful frontend to all of the server logs. And yet another reminder that I’m not a true nerd but a wannabe.

(This post was originally published on my other blog called Spinning Code.)